How the AI Detection Arms Race Is Pushing for Better Assessment Design

Right now, higher education is stuck in a loop that most instructors and learners can feel.

Around and around it goes.

This cycle isn’t producing better outcomes. It’s producing escalation. And increasingly, it consumes time, money, and trust without giving us stronger evidence of learning.

At the Workforce Applied Research Center, we think the most important shift happening right now is this: education is starting, unevenly but clearly, to move from AI detection as enforcement toward assessment design as evidence. You can see it in emerging policy decisions and faculty guidance.

That shift is overdue.

The Problem Isn’t AI, It’s Measurement

It helps to understand what AI detection is actually doing. In most cases, detection tools don’t measure learning. They don’t measure mastery. They don’t measure reasoning. What they try to measure is the likelihood that a piece of text looks machine-generated.

That’s a narrow target.

And while a detection score might be a signal, it isn’t evidence of capability. This difference matters more than most policy debates want to admit.

Let’s be honest. AI didn’t invent shortcut-seeking behavior. Long before AI entered the classroom, learners found ways to work around assessments that rewarded polished artifacts more than real capability. Academic outsourcing has taken plenty of familiar forms:

• turning in recycled work from prior cohorts or shared repositories

• relying on templates and “approved” exemplars instead of original reasoning

• outsourcing big parts of assignments to tutors or third-party help

• buying completed work through paper mills and contract cheating services

• using spin tools and paraphrasers to mask reuse instead of improving understanding

• treating coursework like a compliance deliverable, not a capability-building exercise

• optimizing for credential requirements instead of real performance readiness

We tolerated a lot of this because the system still seemed functional.

AI didn’t create the impulse to outsource. It just lowered the cost, increased the speed, and made the brittleness of many assessment formats impossible to ignore.

In doing so, it exposed a deeper fragility: many assessments were never designed to hold up in a world where powerful tools are always within reach.

Signals Prompt Questions, Evidence Provides Answers

Workforce ARC’s position can be stated simply:

A signal is an indicator that something might be true. It can point us toward an issue, but it’s often noisy, uncertain, and not strong enough to stand on its own.

Evidence is what can support a conclusion under scrutiny. It’s what holds up when questions are asked, reasoning is tested, and capability has to be demonstrated.

AI detector outputs belong in the signals category. They may raise a question. They may start a conversation. They may suggest that an instructor should look more closely. But they can’t carry the weight of high-stakes academic judgment on their own, because they are probabilistic, opaque, and unstable across contexts.

In contrast, evidence is what can be explained, defended, and evaluated clearly.

If higher education wants lasting academic trust, it has to be built on evidence of capability, not on surveillance of unreliable signals.

Why the Detection Arms Race Can’t Restore Trust

AI detection also runs into a basic long-term problem: language isn’t a fingerprint. Already, modern AI systems can produce writing that is often difficult to distinguish from typical human writing. And the boundary is getting blurrier, not clearer, because humans are also using AI to shape and refine their own style. That’s why so many of the supposed “tells” people point to are unreliable.

Some instructors claim that em-dashes are a dead giveaway of AI-generated text. But em-dashes have been used for decades by countless human writers. They’re a style choice, not a signature. Others point to telltale words like “bespoke” as if vocabulary itself proves authorship. But there are only so many clean ways to say “custom,” and different writers simply reach for different terms.

When detection depends on these kinds of shaky heuristics, it can’t deliver durable trust. Even if detection tools improve, the underlying dynamic stays unstable.

Detection invites evasion.

Evasion invites escalation.

Escalation invites mistrust.

The institution becomes more focused on policing the production process, while learners become more focused on avoiding suspicion instead of demonstrating understanding. This isn’t because either side is acting in bad faith. It’s because the assessment itself hasn’t changed. When the task being defended is brittle, enforcement becomes the default response.

But enforcement can’t substitute for measurement.

The deeper question isn’t, “Can we catch AI use?”

The deeper question is, “Does this assessment actually give us evidence of capability?”

Adult Learning Makes the Issue Even Clearer

The workplace is largely outcome-based. Employers care about whether someone can do the work, solve real problems, and explain their decisions. Few, if any, employers routinely run AI-writing detectors on ordinary workplace memos or deliverables.

In most workplaces, the main concern isn’t authorship. It’s data security, compliance, and whether the work holds up.

Beyond that, employers care about quality, accuracy, and accountability, not whether a draft began with AI.

In other words, what matters most is whether the performance holds up under scrutiny, not whether every sentence was produced in isolation.

This challenge becomes even sharper when we think about adult learning and workforce education.

Adult learners are expected to exercise judgment, autonomy, and tool fluency. In real professional environments, competence includes:

• choosing the right tools

• verifying outputs

• explaining decisions

• adapting solutions to context

• owning results under scrutiny

In these settings, tool use isn’t contamination. It’s part of the capability.

The question isn’t whether learners will use AI. It’s whether our assessments can tell the difference between superficial output and real competence, even when assistance is available.

Extended agency isn’t the enemy of integrity. It’s the point of adult education.

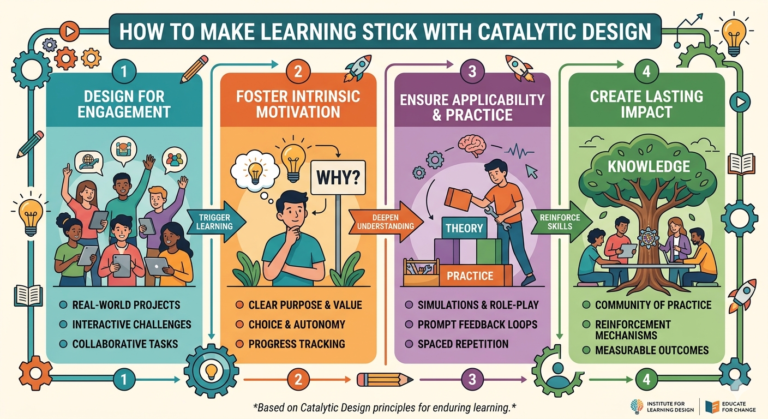

The Path Forward: Assessment as Capability Evidence

The durable response doesn’t begin with detectors or policy add-ons.

It begins with assessment design.

And importantly, many learning institutions are already starting to move in this direction. Teaching centers, faculty leaders, and assessment teams are experimenting with more authentic, evidence-rich approaches. That momentum deserves recognition, and it’s worth continuing.

If we want evidence of learning, we need tasks that require learners to demonstrate capability in observable ways, such as:

• explaining reasoning, not just producing answers

• applying knowledge in unfamiliar scenarios

• defending choices and tradeoffs

• showing iterative work over time

• doing live problem-solving where appropriate

There are practical methods that can help make these assessments more reliable:

• oral defenses or short recorded explanations

• staged drafts and feedback cycles

• decision logs that make judgment visible

• transfer tasks that apply learning in new contexts

• clear rubrics focused on performance, not formatting

Where process matters, process should be assessed directly.

Where judgment matters, judgment should be visible.

The goal isn’t permissiveness. The goal is rigor that holds up.

Less policing. More measurement.

Less artifact control. More capability clarity.

The next step is not to perfect detection, but to keep building assessments that produce real evidence of capability, even in a tool-rich world.

A Timely Shift Worth Accelerating

Across higher education, early signs suggest that reliance on AI detection as proof is being downgraded, while attention is turning toward authentic assessment and evidence-rich evaluation.

AI isn’t going away. Tool use is part of the environment now.

The question is whether higher education will keep investing mainly in enforcement, or whether it will invest in assessment systems that can actually measure what institutions say they value.

The future of academic trust won’t be secured by increasingly sophisticated detection.

It will be secured by designing assessments that make capability visible.

At Workforce ARC, we believe that’s the work ahead.

A Practical Next Step

If you’re an instructor or program leader, you don’t need to redesign everything overnight. Start small.

Pick one assessment this term and ask a simple question: Does this task produce evidence of capability, or does it mostly reward a polished artifact?

Then try one evidence-rich shift:

• add a short explanation or defense component

• require transfer into a new scenario

• build in staged drafts or reflection

• focus your rubric on performance and judgment

Signals will always prompt questions.

But evidence is what provides answers.

That’s where trust will be rebuilt, moving higher education from suspicion to capability-based confidence.